Having looked at the foundations of the storage technology that Cinder uses in the previous posts, we are now ready to explore the basic architecture of Cinder and install Cinder in our playground.

Cinder architecture

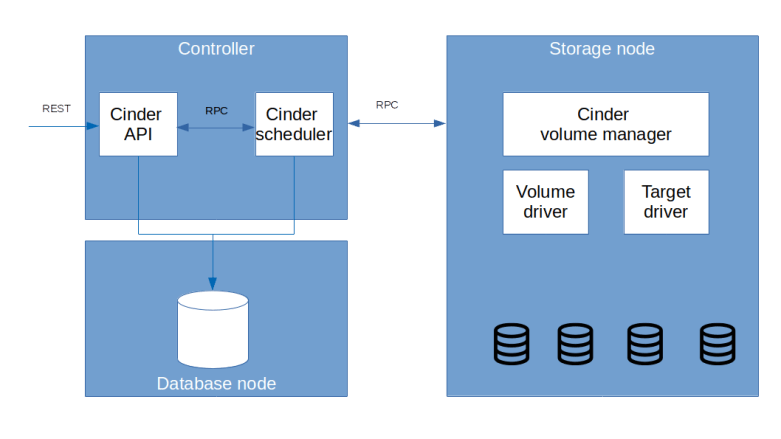

Essentially, Cinder consists of three main components which are running as independent processes and typically on different nodes.

First, there is the Cinder API server cinder-api. This is a WSGI-server running inside of Apache2. As the name suggests, cinder-api is responsible for accepting and processing REST API requests from users and other components of OpenStack and typically runs on a controller node.

Then, there is cinder-volume, the Cinder volume manager. This component is running on each node to which storage is attached (“storage node”) and is responsible for managing this storage, i.e. preparing, maintaining and deleting virtual volumes and exporting these volumes so that they can be used by a compute node. And finally, Cinder comes with its own scheduler, cinder-scheduler, which directs requests to create a virtual volume to an appropriate storage node.

Of course, Cinder also has its own database and communicates with other components of OpenStack, for example with Keystone to authenticate requests and with Glance to be able to create volumes from images.

Cinder can use a variety of different storage backends, ranging from LVM managing local storage directly attached to a storage node, over other standards like NFS and open source solutions like Ceph, to vendor-specific drivers for storage systems from Dell, Huawei, NetApp or Oracle (see the official support matrix for a full list). This is achieved by moving low-level access to the actual volumes into a volume driver. Similarly, Cinder supports various technologies to connect a virtual volume to the compute node on which the instance that wants to use it is running, and encapsulates them in a so-called target driver.
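To make the two driver types a bit more tangible, here is roughly what a backend section in cinder.conf looks like for the LVM and iSCSI combination, following the official installation guide. The backend name lvm and the volume group name are assumptions; the configuration generated by the lab may differ in detail.

```ini
[DEFAULT]
# Name of the backend section(s) that cinder-volume should load
enabled_backends = lvm

[lvm]
# Volume driver: how volumes are created and deleted on the backend
volume_driver = cinder.volume.drivers.lvm.LVMVolumeDriver
volume_group = cinder-volumes
# Target driver: how volumes are exported to the compute nodes
target_protocol = iscsi
target_helper = tgtadm
```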

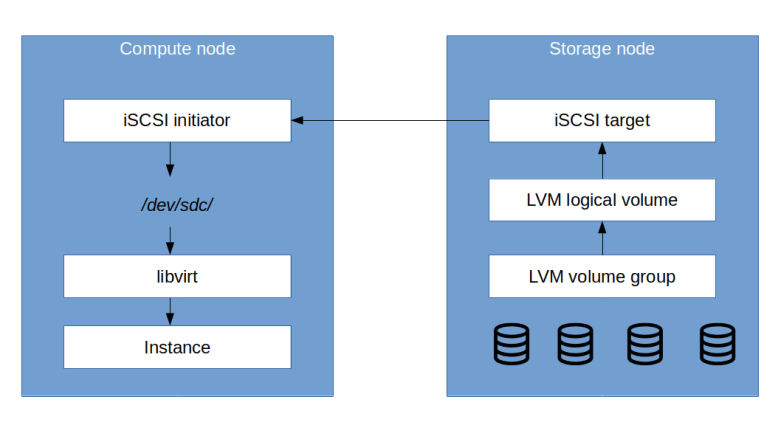

To better understand how Cinder operates, let us take a look at one specific use case: creating a volume and attaching it to an instance. We will go into more detail on this and similar use cases in the next post, but what essentially happens is the following:

- A user (for instance an administrator) sends a request to the Cinder API server to create a logical volume

- The API server asks the scheduler to determine a storage node with sufficient capacity

- The request is forwarded to the volume manager on the target node

- The volume manager uses a volume driver to create a logical volume. With the default settings, this will be the LVM driver, for which a volume group and the underlying physical volumes have been created during installation. LVM will then create a new logical volume

- Next, the administrator attaches the volume to an instance using another API request

- Now, the target driver is invoked which sets up an iSCSI target on the storage node and a LUN pointing to the logical volume

- The storage node informs the compute node about the parameters (portal IP and port, target name) that are needed to access this target

- The Nova compute agent on the compute node invokes an iSCSI initiator which logs into the iSCSI target and makes it available as a local block device

- Finally, the Nova compute agent asks the virtual machine driver (libvirt in our case) to attach this locally mapped device to the instance
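To get a feeling for what the volume driver, the target driver and the initiator do in the steps above, the following sketch prints the commands that one could run by hand to achieve a similar result with LVM, tgt and Open-iSCSI. This is an illustration and not what Cinder literally executes. The volume name, the portal address and the helper functions are made up for this example, and the commands are only printed unless EXECUTE=1 is set.

```shell
#!/bin/sh
# Illustration only: manual equivalents of the steps above.
# All values below are placeholders, not values taken from the lab.
VG=cinder-volumes
VOL=volume-demo
IQN="iqn.2010-10.org.openstack:${VOL}"
PORTAL=192.168.1.20:3260

# Print each command; set EXECUTE=1 to really run it (requires root)
run() {
  if [ "${EXECUTE:-0}" = "1" ]; then "$@"; else echo "$*"; fi
}

# What happens on the storage node
storage_node_steps() {
  # Volume driver: create the logical volume
  run lvcreate -L 1G -n "$VOL" "$VG"
  # Target driver: create an iSCSI target and a LUN backed by the volume
  run tgtadm --lld iscsi --op new --mode target --tid 1 -T "$IQN"
  run tgtadm --lld iscsi --op new --mode logicalunit --tid 1 --lun 1 -b "/dev/$VG/$VOL"
  run tgtadm --lld iscsi --op bind --mode target --tid 1 -I ALL
}

# What happens on the compute node, given portal and target name
compute_node_steps() {
  run iscsiadm -m discovery -t sendtargets -p "$PORTAL"
  run iscsiadm -m node -T "$IQN" -p "$PORTAL" --login
}

storage_node_steps
compute_node_steps
```

After the login, the target shows up as an additional SCSI disk on the compute node, which is the device that libvirt then attaches to the instance.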

Installation steps

After this short summary of the high-level architecture of Cinder, let us move on and take a look at the installation process. Again, the installation follows the standard pattern that we have now seen so many times.

- Create a database for Cinder and prepare a database user

- Add user, services and endpoints in Keystone

- Install the Cinder packages on the controller and the storage nodes

- Modify the configuration files as needed

In addition to these standard steps, there are a few points specific to Cinder. First, as sketched above, Cinder uses LVM to manage virtual volumes on the storage nodes. We therefore need to perform a basic setup of LVM on each storage node, i.e. we need to create physical volumes and a volume group. Second, every compute node will need to act as an iSCSI initiator and therefore needs a valid iSCSI initiator name. The Ubuntu distribution that we use already contains the Open-iSCSI package, which maintains an initiator name in the file /etc/iscsi/initiatorname.iscsi. However, as this name is supposed to be unique, it is not set in the Ubuntu Vagrant image, and we need to generate it once during the installation by running the script /lib/open-iscsi/startup-checks.sh as root.
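In the lab, these two steps are done by the Ansible playbooks, but done by hand they might look like the sketch below. The two helper functions are hypothetical, the device has to be the disk that is attached to your storage node, and cinder-volumes is simply the volume group name that Cinder uses by default.

```shell
# Storage node: create a physical volume and the volume group for Cinder.
# Usage (as root): setup_lvm <device> [<volume group>]
setup_lvm() {
  device="$1"
  vg="${2:-cinder-volumes}"
  pvcreate "$device" && vgcreate "$vg" "$device"
}

# Compute node: print the iSCSI initiator name stored in the given file
# and return a non-zero exit code if no name has been set yet
initiator_name() {
  file="${1:-/etc/iscsi/initiatorname.iscsi}"
  name=$(sed -n 's/^InitiatorName=//p' "$file" 2>/dev/null)
  [ -n "$name" ] && echo "$name"
}
```

On a storage node, one would call something like `setup_lvm /dev/sdc` as root (the device name is only an example). On a compute node, `initiator_name || /lib/open-iscsi/startup-checks.sh`, again run as root, generates a name only if none exists yet.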

There is an additional point that we need to observe which is also not mentioned in the official installation guide for the Stein release. When installing Cinder, you have to define which network interface Cinder will use for the iSCSI traffic. In production, you would probably want to use a separate storage network, but for our setup, we use the management network. According to the installation guide, it should be sufficient to set the configuration item my_ip, but in reality, this did not work for me and I had to set the item target_ip_address on the storage nodes.
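In cinder.conf on a storage node, this could look as follows. The IP address is a placeholder for the address of the storage node on the management network, and placing the item in the backend section is an assumption on my side.

```ini
[DEFAULT]
# Management IP of this storage node (placeholder)
my_ip = 192.168.1.20

[lvm]
# IP address on which the iSCSI target is exported (placeholder)
target_ip_address = 192.168.1.20
```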

Lab13: running and testing the Cinder installation

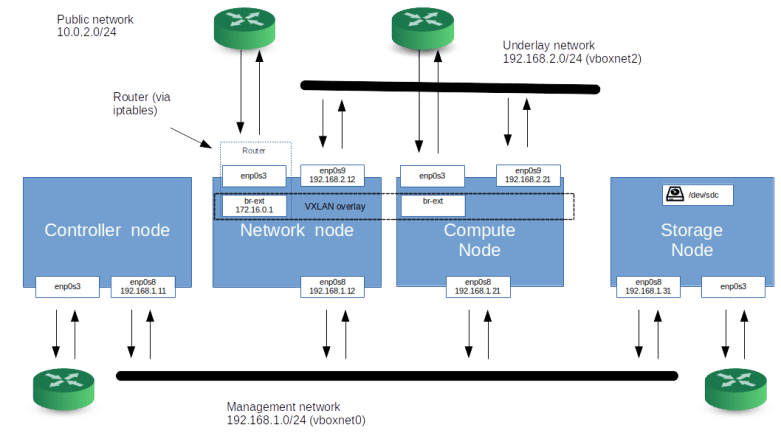

Time to try this out and set up a lab for that. The first thing we need to do is to modify our Vagrantfile to add a storage node. In order to reduce memory consumption a bit, we also move from two compute nodes to only one compute node, so that our new setup looks as follows.

As mentioned above, we will not use a dedicated storage network, but send the iSCSI traffic over the management network, so that our network setup remains essentially unchanged. To bring up this scenario and to run the Cinder installation plus a demo setup, do the following.

    git clone https://github.com/christianb93/openstack-labs
    cd openstack-labs/Lab13
    vagrant up
    ansible-playbook -i hosts.ini site.yaml
    ansible-playbook -i hosts.ini demo.yaml

When all this completes, we can run some tests. First, verify that all storage nodes are up and running. For that purpose, log into the controller node and use the OpenStack CLI to retrieve a list of all recognized storage nodes.

    vagrant ssh controller
    source admin-openrc
    openstack volume service list

The output that you get should look similar to the sample output below.

    +------------------+-------------+------+---------+-------+----------------------------+
    | Binary           | Host        | Zone | Status  | State | Updated At                 |
    +------------------+-------------+------+---------+-------+----------------------------+
    | cinder-scheduler | controller  | nova | enabled | up    | 2020-01-01T14:03:14.000000 |
    | cinder-volume    | storage@lvm | nova | enabled | up    | 2020-01-01T14:03:14.000000 |
    +------------------+-------------+------+---------+-------+----------------------------+

We see that there are two services that have been detected. First, there is the Cinder scheduler running on the controller node, and then, there is the Cinder volume manager on the storage node. Both are “up”, which is promising.
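If you want to automate this check instead of reading the table, the OpenStack CLI can print plain values that are easy to process. The helper below is a small sketch of my own (the function name is made up): it reads lines consisting of binary, host and state, and fails if any service is not up.

```shell
# Read lines of the form "<binary> <host> <state>" from stdin,
# report every service that is not "up" and fail if there is one
check_services_up() {
  awk '$3 != "up" { print "DOWN: " $1 " on " $2; bad = 1 } END { exit bad + 0 }'
}
```

On the controller, it could be fed with `openstack volume service list -f value -c Binary -c Host -c State | check_services_up`.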

Next, let us try to create a volume and attach it to one of our test instances, say demo-instance-3. For this to work, you need to be on the network node (as we will have to establish connectivity to the instance).

    vagrant ssh network
    source demo-openrc
    openstack volume create \
      --size=1 \
      demo-volume
    openstack server add volume \
      demo-instance-3 \
      demo-volume
    openstack server show demo-instance-3
    openstack server ssh \
      --identity demo-key \
      --login cirros \
      --private demo-instance-3

You should now be inside the instance. When you run lsblk, you should see that a new block device /dev/vdb has been added. So our installation seems to work!
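One caveat if you put the commands to create and attach the volume into a script: the create request returns while the volume is still in status "creating", and a volume can only be attached once it is "available" (after attaching, it is reported as "in-use"). A small polling helper, which is my own sketch and not part of the lab, could look like this.

```shell
# Poll until the volume has the wanted status, give up after a number of tries.
# Usage: wait_for_volume_status <volume> <status> [<tries>] [<seconds between tries>]
wait_for_volume_status() {
  volume="$1"; wanted="$2"; tries="${3:-30}"; interval="${4:-2}"
  while [ "$tries" -gt 0 ]; do
    # Ask Cinder for the current status of the volume
    status=$(openstack volume show "$volume" -f value -c status)
    [ "$status" = "$wanted" ] && return 0
    tries=$((tries - 1))
    sleep "$interval"
  done
  echo "volume $volume is '$status', expected '$wanted'" >&2
  return 1
}
```

In a script, `wait_for_volume_status demo-volume available` would then go between the create and the add volume command.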

Having a working testbed, we are now ready to dive a little deeper into the inner workings of Cinder. In the next post, we will try to understand some of the common use cases in a bit more detail.
