In my previous post, we saw how the libvirt toolset can be used to directly create virtual volumes, virtual networks and KVM virtual machines. If this is not your first visit to my blog, you will know that I am a big fan of automation, so let us investigate today how we can use Ansible to bring up KVM domains automatically.

Using the Ansible libvirt modules

Fortunately, Ansible comes with a couple of libvirt modules that we can use for this purpose. These modules mostly follow the same approach: we first create objects from an XML template and can then perform additional operations on them, referring to them by name.

To use these modules, you will have to install a couple of additional libraries on your workstation. First, obviously, you need Ansible, and I suggest that you take a look at my short introduction to Ansible if you are new to it and do not have it installed yet. I have used version 2.8.6 for this post.

Next, Ansible of course uses Python, and there are a couple of Python modules that we need. In the first post on libvirt, I have already shown you how to install the python3-libvirt package. In addition, you will have to run

pip3 install lxml

to install the XML module that we will need.
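A quick way to confirm that both libraries are visible to Python is to try importing them. The following is a minimal sketch that assumes Ansible runs its modules with the python3 interpreter on your workstation; the function names are made up for this example.

```shell
# Sketch: check that the Python modules used by the Ansible libvirt
# modules can be imported. Assumes Ansible uses "python3" here.

have_py_module() {
    # Succeeds if "import <module>" works, fails otherwise
    python3 -c "import $1" >/dev/null 2>&1
}

check_libvirt_deps() {
    missing=""
    for mod in libvirt lxml; do
        have_py_module "$mod" || missing="$missing $mod"
    done
    if [ -n "$missing" ]; then
        echo "missing Python modules:$missing" >&2
        return 1
    fi
    echo "libvirt and lxml are available"
}
```

Running check_libvirt_deps before the first playbook run should report any module that is still missing.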

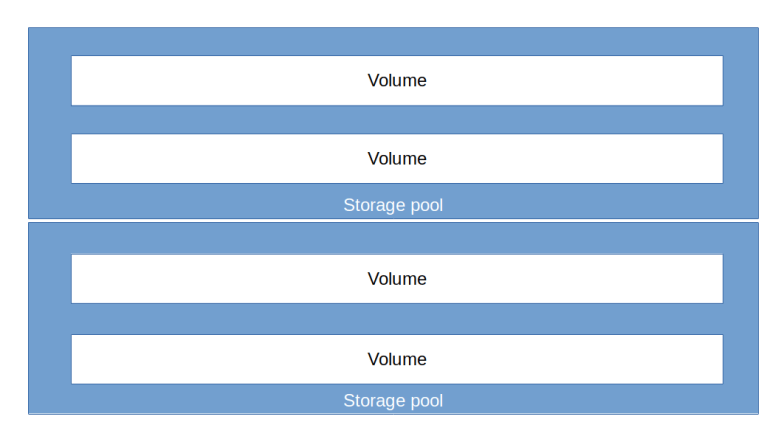

Let us start with a volume pool. To isolate our volumes from other volumes on the same hypervisor, it is useful to put them into a separate volume pool. The virt_pool module can do this for us, provided that we supply an XML definition of the pool. We can create this definition from a Jinja2 template that takes the name of the pool and its location on disk as parameters.
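To illustrate what such a template produces, here is a small shell function that renders a minimal libvirt pool of type dir from a name and a path. This is a sketch, not the template used in the repository; the function name and the pool name test-pool are hypothetical, and the commented virsh commands are a manual equivalent of creating and starting the pool.

```shell
# Sketch: render a libvirt "dir" pool definition (hypothetical helper)
render_pool_xml() {
    pool_name=$1
    pool_path=$2   # should be an absolute path
    cat <<EOF
<pool type='dir'>
  <name>${pool_name}</name>
  <target>
    <path>${pool_path}</path>
  </target>
</pool>
EOF
}

# Example (not run here):
#   render_pool_xml test-pool "$PWD/state/pool" > state/pool.xml
#   virsh pool-define state/pool.xml
#   virsh pool-build test-pool       # creates the target directory
#   virsh pool-start test-pool
#   virsh pool-autostart test-pool
```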

At this point, we have to decide where we store the state of our configuration: the storage pool, but potentially also SSH keys and the XML templates that we use. I have decided to put all this into a directory called state, located in the same directory as the playbook. This avoids polluting system-wide or user-wide directories, but also implies that before we eventually delete this directory, we first have to remove the storage pool from libvirt; otherwise, the libvirt XML structures in /etc/libvirt would keep a stale reference to a non-existing directory.

Once we have a storage pool, we can create a volume. Unfortunately, Ansible does not (at least not at the time of writing this) have a module to handle libvirt volumes, so a bit of scripting is needed. Essentially, we will have to

- Download a base image if not done yet – we can use the get_url module for that purpose

- Check whether our volume already exists (this is needed to make sure that our playbook is idempotent)

- If not, create a new overlay volume using our base image as backing store
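The three steps above can be sketched with plain virsh calls. This assumes that the base image is a qcow2 file kept on the local file system outside the pool, that curl is available for the download, and that a virtual size of 10G is acceptable; the function, pool and volume names are hypothetical and not taken from the playbook.

```shell
ensure_base_image() {
    image=$1
    url=$2
    # Step 1: download the base image only if we do not have it yet
    [ -f "$image" ] || curl -fL -o "$image" "$url"
}

ensure_overlay_volume() {
    pool=$1
    volume=$2
    base=$3   # absolute path of the qcow2 base image
    # Step 2: leave an existing volume alone (keeps repeated runs idempotent)
    if virsh vol-info --pool "$pool" "$volume" >/dev/null 2>&1; then
        echo "volume $volume already exists"
        return 0
    fi
    # Step 3: create a qcow2 overlay backed by the base image
    virsh vol-create-as "$pool" "$volume" 10G \
        --format qcow2 \
        --backing-vol "$base" \
        --backing-vol-format qcow2
}
```

The existence check is what makes a second run harmless: it finds the volume and leaves it untouched instead of failing or overwriting it.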

We could of course upload the base image into the libvirt pool or, alternatively, keep the base image outside of libvirt's control and refer to it via its location on the local file system.

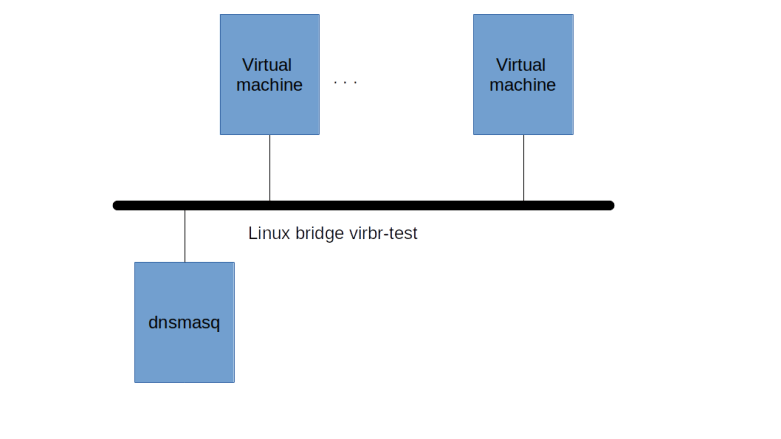

At this point, we have a volume and can start to create a network. Here, we can again use an Ansible module which has idempotency already built into it – virt_net. As before, we need to create an XML description for the network from a Jinja2 template and hand that over to the module to create our network. Once created, we can then refer to it by name and start it.
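For reference, a minimal NAT network with a DHCP range could be rendered as follows. The network name and the 192.168.200.0/24 address range are hypothetical placeholders, not values from the playbook; the commented virsh commands correspond to defining and starting the network by hand.

```shell
# Sketch: render a NAT network with DHCP (hypothetical helper)
render_network_xml() {
    net_name=$1
    prefix=$2   # first three octets of a /24 network, e.g. 192.168.200
    cat <<EOF
<network>
  <name>${net_name}</name>
  <forward mode='nat'/>
  <ip address='${prefix}.1' netmask='255.255.255.0'>
    <dhcp>
      <range start='${prefix}.10' end='${prefix}.100'/>
    </dhcp>
  </ip>
</network>
EOF
}

# Example (not run here):
#   render_network_xml test-network 192.168.200 > state/network.xml
#   virsh net-define state/network.xml
#   virsh net-start test-network
#   virsh net-autostart test-network
```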

Finally, it's time to create a virtual machine. Here, the virt module comes in handy. Still, we need an XML description of our domain, and there are several ways to create one. We could of course build an XML file from scratch based on the specification on libvirt.org, or simply use the tools explored in the previous post to create a machine manually, dump its XML definition and use it as a template. I strongly recommend doing both: dump the XML file of a virtual machine and then go through it line by line, with the libvirt documentation open in a separate window, to see what each part does.
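When you reuse the output of virsh dumpxml as a template, it helps to first drop the values that must be unique per domain. The sketch below removes the uuid element and the MAC address lines; if these are missing, libvirt generates new values when the domain is defined. It assumes the usual dumpxml layout with each of these elements on a single line, and the function name is hypothetical.

```shell
strip_unique_ids() {
    # Read domain XML on stdin and drop the <uuid> element and all
    # <mac address=.../> lines so that libvirt assigns fresh values
    sed -e '/<uuid>.*<\/uuid>/d' -e '/<mac address=/d'
}

# Example (not run here):
#   virsh dumpxml test-instance | strip_unique_ids > state/domain.xml.j2
```

This is only a first cleanup pass; the rest of the file still deserves the line-by-line review recommended above.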

When defining the virtual machine via the virt module, I ran into error messages that did not show up when using the same XML definition with virsh directly, so I decided to use virsh for this step as well.
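Calling virsh directly means that we have to take care of idempotency ourselves. A minimal sketch, with a hypothetical function name, defines the domain only if libvirt does not know it yet and starts it only if it is not already running:

```shell
ensure_domain_running() {
    name=$1
    xml=$2
    # Define the domain only if libvirt does not know it yet
    if ! virsh dominfo "$name" >/dev/null 2>&1; then
        virsh define "$xml" || return 1
    fi
    # Start it unless it is already running
    if [ "$(virsh domstate "$name" 2>/dev/null)" != "running" ]; then
        virsh start "$name"
    fi
}

# Example (not run here):
#   ensure_domain_running test-instance state/domain.xml
```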

If you want to try out the code as it stood at this point, install the required modules as described above and then run the following commands (there is still an issue with this version, which we will fix in a minute):

git clone https://github.com/christianb93/ansible-samples

cd ansible-samples

cd libvirt

git checkout origin/v0.1

ansible-playbook site.yaml

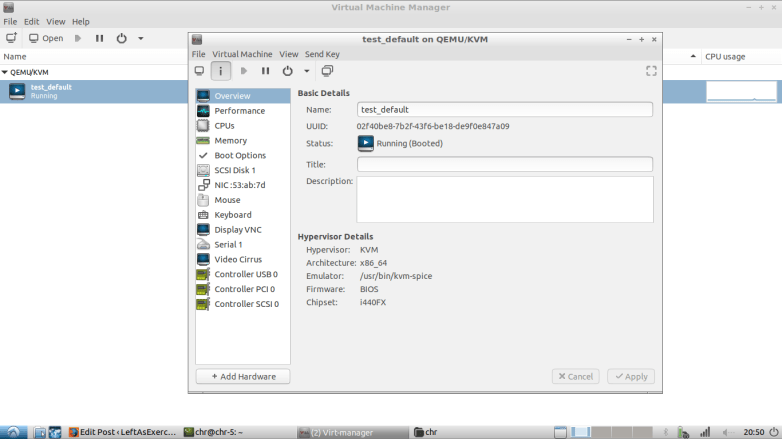

When you now run virsh list, you should see the newly created domain test-instance. Using virt-viewer or virt-manager, you should be able to watch its screen as it boots (after a delay of a few seconds because no device is labeled as root device; this is not a problem, as the boot process continues anyway).

So now you can log into your machine…but wait a minute, the image that we use (the Ubuntu cloud image) has no root password set. No problem, we will use SSH. So let us figure out the IP address of our domain using virsh domifaddr test-instance…hmmm…no IP address. Something is still wrong with our machine: it's running, but there is no way to log into it. And even if we had an IP address, we could not SSH into it because there is no SSH key.

Apparently we are missing something, and in fact, things like obtaining a DHCP lease and installing an SSH key would be the job of cloud-init. So there is a problem with the cloud-init configuration of our machine, which we will now investigate and fix.

Using cloud-init

First, we have to diagnose our problem in a bit more detail. To do this, it would of course be beneficial if we could log into our machine. Fortunately, there is a great tool called virt-customize which is part of the libguestfs project and allows you to manipulate a virtual disk image. So let us shut down our machine, add a root password and restart the machine.

virsh destroy test-instance

sudo virt-customize -a state/pool/test-volume --root-password password:secret

virsh start test-instance

After some time, you should now be able to log into the instance as root using the password “secret”, either from the VNC console or via virsh console test-instance.

Inside the guest, the first thing we can try is to run dhclient to get an IP address. Once this completes, you should see that ens3 has an IP address assigned, so our network and the DHCP server work.

Next, let us try to understand why no IP address was obtained via DHCP during the boot process. Usually, this is set up by cloud-init, which, in turn, is started by systemd. Specifically, cloud-init uses a systemd generator, i.e. a script (/lib/systemd/system-generators/cloud-init-generator) that dynamically creates unit files and targets. This script (or rather the Jinja2 template used to create it when cloud-init is installed) can be found in the cloud-init source repository.

Looking at this script and the logging output in our test instance (located at /run/cloud-init), we can now figure out what has happened. First, the script tries to determine whether cloud-init should be enabled. For that purpose, it uses the following sources of information.

- The kernel command line as stored in /proc/cmdline, where it will search for a parameter cloud-init which is not set in our case

- Marker files in /etc/cloud, which are also not there in our case

- A default as fallback, which is set to “enabled”
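To make the decision order concrete, here is a simplified sketch of it in shell. It is not the real generator: it only looks for cloud-init=disabled on the kernel command line and for the marker file /etc/cloud/cloud-init.disabled before falling back to the default, and the function name is hypothetical. The file locations are parameters so that the logic can be tried on sample files.

```shell
cloud_init_enabled() {
    cmdline_file=${1:-/proc/cmdline}
    etc_dir=${2:-/etc/cloud}
    # 1. Kernel command line: an explicit cloud-init=disabled wins
    case " $(cat "$cmdline_file") " in
        *" cloud-init=disabled "*)
            echo disabled
            return 0
            ;;
    esac
    # 2. Marker file in /etc/cloud
    if [ -e "$etc_dir/cloud-init.disabled" ]; then
        echo disabled
        return 0
    fi
    # 3. Fallback: the default, which is "enabled"
    echo enabled
}
```

In our test instance, neither the kernel parameter nor the marker file is present, so this sketch, like the generator, ends up at the default.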

So far so good, cloud-init has figured out that it should be enabled. Next, however, it checks for a data source, i.e. a source of meta-data and user-data. This is done by calling another script called ds-identify. It is instructive to re-run this script in our virtual machine with an increased log level and take a look at the output.

DEBUG=2 /usr/lib/cloud-init/ds-identify --force

cat /run/cloud-init/ds-identify.log

Here we see that the script tries several sources, each modeled as a function. An example is the check for EC2 metadata, which combines data like the DMI serial number or hypervisor IDs with “seed files” (which can be used to enforce the use of a data source) to decide whether we are running on EC2.

For setups like ours, which are not part of a cloud environment, there is a special data source called NoCloud. To force cloud-init to use this data source, there are several options.

- Add the switch “ds=nocloud” to the kernel command line

- Fake a DMI product serial number containing “ds=nocloud”

- Add a special marker directory (“seed directory”) to the image

- Attach a disk that contains a file system with label “cidata” or “CIDATA” to the machine

So the first step towards using cloud-config is to actually enable this data source. We will choose the approach of adding a correctly labeled disk image to our machine. To create such a disk, there is a nice tool called cloud-localds, but we will do this manually to better understand how it works.

First, we need to define the cloud-config data that we want to feed to cloud-init by putting it onto the disk. In general, cloud-init expects two pieces of data: meta data, providing information on the instance, and user data, which contains the actual cloud-init configuration.

For the metadata, we use a file containing only a bare minimum.

$ cat state/meta-data

instance-id: iid-test-instance

local-hostname: test-instance

For the user data, we use the example from the cloud-init documentation as a starting point, which simply sets a password for the default user.

$ cat state/user-data

#cloud-config

password: password

chpasswd: { expire: False }

ssh_pwauth: True
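If you prefer not to create these files by hand, a small helper can write both of them. This sketch reproduces the contents shown above; the function name and the convention of deriving the instance-id from the machine name are assumptions for illustration.

```shell
write_seed_files() {
    dir=$1
    name=$2
    # meta-data: minimal instance information
    cat > "$dir/meta-data" <<EOF
instance-id: iid-${name}
local-hostname: ${name}
EOF
    # user-data: must start with the #cloud-config marker line
    cat > "$dir/user-data" <<'EOF'
#cloud-config
password: password
chpasswd: { expire: False }
ssh_pwauth: True
EOF
}

# Example (not run here):
#   write_seed_files state test-instance
```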

Now we use the genisoimage utility to create an ISO image from this data. Here is the command that we need to run.

genisoimage -o state/cloud-config.iso -joliet -rock -volid cidata state/user-data state/meta-data

Now open virt-manager, shut down the machine, navigate to the machine details and use “Add hardware” to add our new ISO image as a CDROM device. When you now restart the machine, you should be able to log in as user “ubuntu” with the password “password”.
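The same change can also be made from the command line. The sketch below uses virsh attach-disk with --config, so it modifies the persistent domain definition and takes effect at the next start. It assumes a machine type with an IDE bus (hence the target name hdc) and an absolute path to the ISO image; the function name is hypothetical.

```shell
attach_seed_iso() {
    domain=$1
    iso=$2   # must be an absolute path
    # --config: change the persistent definition (effective at next start)
    # hdc: target on the IDE bus, assumed to exist for this machine type
    virsh attach-disk "$domain" "$iso" hdc \
        --type cdrom --mode readonly --config
}

# Example (not run here), with the domain shut off:
#   attach_seed_iso test-instance "$PWD/state/cloud-config.iso"
#   virsh start test-instance
```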

When playing with this, there is an interesting pitfall. cloud-init actually caches data on disk and will only process a changed configuration if the instance-ID changes. So if cloud-init has run once and you then change the configuration, you need to delete the root volume as well (or change the instance-ID) to make sure that this cache is not used; otherwise, you will run into confusing issues during testing.
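Instead of deleting the root volume, you can generate a new instance-id for each rebuild, so that cloud-init treats the machine as a new instance. The timestamp suffix below is just one possible convention (ours, not a cloud-init requirement), and the function name is hypothetical; the ISO image has to be regenerated after the meta-data changes.

```shell
new_instance_id() {
    # e.g. iid-test-instance-<seconds since the epoch>
    echo "iid-$1-$(date +%s)"
}

# Example (not run here): write it into meta-data before building the ISO
#   printf 'instance-id: %s\nlocal-hostname: %s\n' \
#       "$(new_instance_id test-instance)" test-instance > state/meta-data
#
# Inside a running guest, recent cloud-init versions can instead remove
# the cached state so that the next boot is processed from scratch:
#   sudo cloud-init clean
```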

Similar mechanisms need to be kept in mind for the network configuration. When running for the first time, cloud-init creates a network configuration in /etc/netplan. This configuration makes sure that the network is correctly configured again when we reboot the machine, but it contains the MAC address of the virtual network interface. So if you destroy and undefine the domain while leaving the disk untouched and then create a new domain (with a new MAC address) using the same disk, the network configuration will fail because the MAC address no longer matches.
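To check whether you have hit this problem, you can compare the MAC address recorded in the netplan configuration with that of the interface inside the guest. The sketch assumes that the generated file is /etc/netplan/50-cloud-init.yaml, that the MAC address appears unquoted after macaddress:, and that the interface is ens3; the function names are hypothetical.

```shell
netplan_mac() {
    # Print the first "macaddress:" value found in a netplan YAML file
    awk '$1 == "macaddress:" { print $2; exit }' "$1"
}

netplan_matches_nic() {
    # Succeed if the MAC in the netplan file equals the one in the given
    # sysfs address file, e.g. /sys/class/net/ens3/address
    [ "$(netplan_mac "$1")" = "$(cat "$2")" ]
}

# Example (not run here), inside the guest:
#   netplan_matches_nic /etc/netplan/50-cloud-init.yaml \
#       /sys/class/net/ens3/address || echo "stale netplan configuration"
```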

Let us now automate the entire process using Ansible and add some additional configuration data. First, using a password is of course not the most secure approach. Instead, we can use the ssh_authorized_keys key in the cloud-config file, which accepts a list of public SSH keys (as strings) that will be added as authorized keys for the default user.
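A sketch of the corresponding steps: generate a key pair in the state directory if none exists yet, and write a user-data file that authorizes its public key for the default user. The key path state/ssh-key matches the one used in the SSH command later in this post; the function names and the RSA key type are assumptions.

```shell
ensure_ssh_key() {
    key=$1
    # Generate an RSA key pair without passphrase unless it already exists
    [ -f "$key" ] || ssh-keygen -q -t rsa -b 4096 -N "" -f "$key"
}

write_key_user_data() {
    out=$1
    pubkey_file=$2
    # ssh_authorized_keys takes a list; here it contains a single key
    {
        echo "#cloud-config"
        echo "ssh_authorized_keys:"
        echo "  - $(cat "$pubkey_file")"
    } > "$out"
}

# Example (not run here):
#   ensure_ssh_key state/ssh-key
#   write_key_user_data state/user-data state/ssh-key.pub
```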

Next, the way we have managed our ISO image is not yet ideal. In fact, when we attach the image to a virtual machine, libvirt will take ownership of the file and later change its owner and group to “root”, so that an ordinary user cannot easily delete it. To fix this, we can create the ISO image as a volume under the control of libvirt (i.e. in our volume pool) so that we can remove or update it using virsh.
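Uploading the ISO image into the pool can be done with virsh as well. This sketch creates a raw volume of exactly the size of the image and then uploads the file into it, deleting an older volume of the same name first so that a regenerated image is picked up. The function, pool and volume names are hypothetical, and a pool of type dir is assumed.

```shell
upload_iso_volume() {
    pool=$1
    vol=$2
    iso=$3
    # Size of the local ISO image in bytes (tr strips padding some wc add)
    size=$(wc -c < "$iso" | tr -d ' ')
    # Delete an older volume with the same name so the new image is used
    if virsh vol-info --pool "$pool" "$vol" >/dev/null 2>&1; then
        virsh vol-delete --pool "$pool" "$vol" || return 1
    fi
    # Create an empty raw volume of the right size and copy the image in
    virsh vol-create-as "$pool" "$vol" "$size" --format raw || return 1
    virsh vol-upload --pool "$pool" "$vol" "$iso"
}

# Example (not run here):
#   upload_iso_volume test-pool cloud-config.iso state/cloud-config.iso
```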

To see all this in action, clean up by removing the virtual machine and the generated ISO file in the state directory (which should work as long as your user is in the kvm group), get back to the directory into which you cloned my repository and run

cd libvirt

git checkout master

ansible-playbook site.yaml

# Wait for machine to get an IP

ip=""

while [ -z "$ip" ]; do

  sleep 5

  echo "Waiting for IP..."

  ip=$(virsh domifaddr test-instance | grep "ipv4" | awk '{print $4}' | cut -d/ -f1)

done

echo "Got IP $ip, waiting another 5 seconds"

sleep 5

ssh -i ./state/ssh-key ubuntu@$ip

This will run the playbook which configures and brings up the machine, wait until the machine has acquired an IP address and then SSH into it using the ubuntu user with the generated SSH key.

The playbook also creates two scripts and places them in the state directory. The first script, ssh.sh, does exactly the same as our snippet above, i.e. it figures out the IP address and SSHs into the machine. The second script, cleanup.sh, removes all created objects except the downloaded Ubuntu cloud image, so that you can start over with a fresh install.
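A cleanup routine along these lines could look as follows. This is not the cleanup.sh from the repository, just a sketch with hypothetical names. It removes the domain, the network and the listed volumes, and it removes the pool from libvirt before the pool directory is deleted, so that no stale reference remains in /etc/libvirt. Errors are ignored so that it also works when some objects are already gone.

```shell
# Usage: cleanup_lab DOMAIN NETWORK POOL VOLUME...
cleanup_lab() {
    domain=$1
    net=$2
    pool=$3
    shift 3
    # Stop the domain (harmless if not running) and remove its definition
    virsh destroy "$domain" >/dev/null 2>&1
    virsh undefine "$domain" >/dev/null 2>&1
    # Stop and remove the network
    virsh net-destroy "$net" >/dev/null 2>&1
    virsh net-undefine "$net" >/dev/null 2>&1
    # Delete only the listed volumes; anything not listed is kept
    for vol in "$@"; do
        virsh vol-delete --pool "$pool" "$vol" >/dev/null 2>&1
    done
    # Remove the pool from libvirt before its directory disappears
    virsh pool-destroy "$pool" >/dev/null 2>&1
    virsh pool-undefine "$pool" >/dev/null 2>&1
    return 0
}

# Example (not run here):
#   cleanup_lab test-instance test-network test-pool test-volume cloud-config.iso
#   # only now is it safe to delete the pool directory in state/
```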

This completes our post on using Ansible with libvirt. Of course, this does not replace a more sophisticated tool like Vagrant, but for simple setups, it works nicely and avoids the additional dependency on Vagrant. I hope you had some fun – keep on automating!