In the previous posts, we used standard Linux tools to establish and configure our network interfaces. This works well on a single machine, but becomes very difficult to manage if you need to run environments with hundreds or even thousands of machines. Open vSwitch (OVS) is an open source software switch which can be integrated with SDN control planes and cloud management software. In this post, we will look at the theoretical background of OVS, leaving the practical implementation of some examples to the next post.

Some terms from the world of software defined networks

It is likely that you have heard the magical word SDN before, and it is also quite likely that you have already found that giving a precise meaning to this term is hard. Still, there is a certain agreement that one of the core ideas of SDN is to separate the data flow through your networking devices from the control logic and networking configuration.

In a traditional data center, your network would be implemented by a large number of devices like switches and routers. Each of these devices holds some configuration and typically has a way to change that configuration remotely. Thus, the configuration is tightly integrated with the networking infrastructure, and making sure that the entire configuration is consistent and matches the desired state of your network is hard.

With software defined networking, you separate the configuration from the networking equipment and manage it centrally. Thus, the networking equipment handles the flow of data, and is referred to as the data plane or flow plane, while a central component called the control plane is responsible for controlling the flow of data.

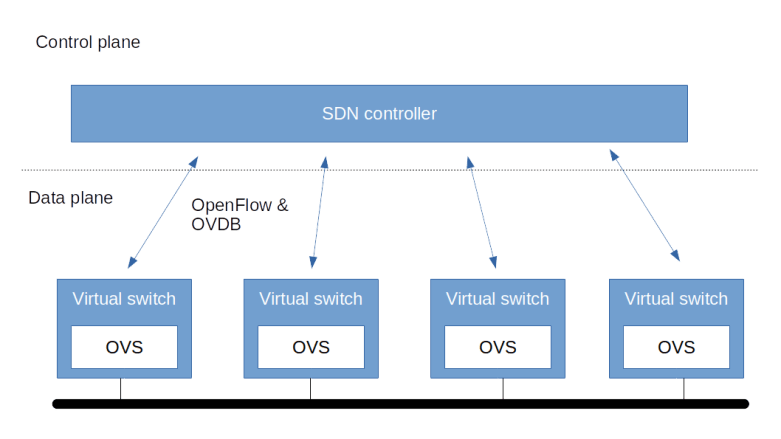

This is still a bit vague, but becomes a bit more tangible when we look at an example. Enter Open vSwitch (OVS). OVS is a software switch that turns a Linux server (which we will call a node) into a switch. Technically, OVS is a set of server processes that are installed on each node and that handle the network flow between the interfaces of the node. These nodes together make up the data plane. On top of that, there is a control plane or controller. This controller talks to the individual nodes to make sure the rules that they use to manage traffic (called the flows) are set up accordingly.

To allow controllers and switch nodes to interact, an open standard called OpenFlow has been created, which defines a common way to describe flows and to exchange data between the controller and the switches. OVS supports OpenFlow (in several protocol versions, depending on the OVS release and the bridge configuration) and can thus be combined with OpenFlow-based controllers like Faucet or OpenDaylight, creating a layered architecture. Additionally, a switch can be configured to ask the controller how to handle a packet for which no matching flow can be found.
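To make the notion of a flow more tangible, here is a minimal sketch in Python of a flow table lookup, including the table-miss case in which the packet is handed to the controller. This is a toy model and not how OVS or OpenFlow are implemented: the `Flow` class, the `lookup` function, the field names and the action strings are all made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class Flow:
    priority: int
    match: dict   # header field -> required value; absent fields act as wildcards
    action: str   # illustrative action label, not real OpenFlow syntax


def lookup(flows, packet):
    """Return the action of the highest-priority flow that matches the packet."""
    for flow in sorted(flows, key=lambda f: f.priority, reverse=True):
        if all(packet.get(field) == value for field, value in flow.match.items()):
            return flow.action
    # Table miss: in this sketch the switch is configured to ask the controller
    return "send_to_controller"


flows = [
    Flow(priority=100, match={"in_port": 1, "eth_type": 0x0806}, action="flood"),
    Flow(priority=50, match={"in_port": 1}, action="output:2"),
]

print(lookup(flows, {"in_port": 1, "eth_type": 0x0806}))  # flood
print(lookup(flows, {"in_port": 1, "eth_type": 0x0800}))  # output:2
print(lookup(flows, {"in_port": 3, "eth_type": 0x0800}))  # send_to_controller
```

The third packet matches no flow, which is exactly the situation in which a real switch could be set up to consult its controller.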

In this architecture, OVS uses OpenFlow to exchange flows with the controller. To exchange information on the underlying configuration of the virtual bridge (which ports are connected, how these ports are set up, …), OVS provides a second protocol called OVSDB (see below), which can also be used by the control plane to change the configuration of the virtual switch. Some people would probably prefer to call the part of the control logic which handles this the management plane, in contrast to the control plane, which really handles the data flow only.

Components of Open vSwitch

Let us now dig a little bit into the architecture of OVS itself. Essentially, OVS consists of three components plus a set of command-line interfaces to operate the OVS infrastructure.

First, there is the OVS virtual switch daemon ovs-vswitchd. This is a server process running on the virtual switch and is connected to a socket (usually a Unix socket, unless it needs to communicate with controllers not on the same machine). This component is responsible for actually operating the software defined switch.

Then, there is a state store, in the form of the ovsdb-server process. This process maintains the state that is managed by OVS, i.e. the objects like bridges, ports and interfaces that make up the virtual switch, and tables like the flow tables used by OVS. This state is usually kept in a file in JSON format in /etc/openvswitch. The OVSDB server and the switch daemon exchange information via a Unix domain socket (in the database world, the switch daemon is a client to the OVSDB database server). Other clients can connect to the OVSDB using a JSON-based protocol called the OVSDB protocol (which is described in RFC 7047) to retrieve and update information.
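To give an idea of what the OVSDB protocol looks like on the wire, the following Python sketch builds two JSON-RPC requests following RFC 7047: a `list_dbs` call and a `transact` call with a single `select` operation on the Bridge table. The helper `make_request` is a made-up name, and the sketch only constructs the messages; actually sending them over a socket to ovsdb-server is left out.

```python
import json


def make_request(method, params, request_id):
    """Serialize a JSON-RPC request in the shape used by the OVSDB protocol."""
    return json.dumps({"method": method, "params": params, "id": request_id})


# Ask the server which databases it hosts
list_dbs = make_request("list_dbs", [], 0)

# Read the names of all bridges: first parameter is the database name,
# followed by one or more operations
select_bridges = make_request(
    "transact",
    [
        "Open_vSwitch",
        {"op": "select", "table": "Bridge", "where": [], "columns": ["name"]},
    ],
    1,
)

print(list_dbs)
print(select_bridges)
```

An empty `where` list selects all rows, so the second request would return one row per bridge.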

The third main component of OVS is a Linux kernel module openvswitch. This module is now part of the official Linux kernel tree and therefore is typically pre-installed. This kernel module handles one part of the OVS data path, sometimes called the fast path. Known flows are handled entirely in kernel space. New flows are handled once in the user space part of the datapath (slow path) and then, once the flow is known, subsequently in the kernel data path.
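The interplay of slow path and fast path is essentially a caching pattern, which the following Python sketch tries to capture. It is a deliberately simplified toy model: `ToyDatapath`, `decide` and the packet key layout are invented for this illustration and do not correspond to the real kernel module or its interfaces.

```python
class ToyDatapath:
    """Toy model: a dictionary stands in for the kernel flow cache."""

    def __init__(self, slow_path):
        self.cache = {}             # fast path: known flows
        self.slow_path = slow_path  # user space logic, consulted on a miss
        self.upcalls = 0            # how often the slow path was needed

    def process(self, packet_key):
        action = self.cache.get(packet_key)
        if action is None:
            # Unknown flow: ask user space once, then remember the answer
            self.upcalls += 1
            action = self.slow_path(packet_key)
            self.cache[packet_key] = action
        return action


def decide(packet_key):
    """Stand-in for the user space decision (hypothetical rule)."""
    in_port, _dst_mac = packet_key
    return "output:2" if in_port == 1 else "drop"


dp = ToyDatapath(decide)
for _ in range(3):
    dp.process((1, "aa:bb:cc:dd:ee:ff"))
print(dp.upcalls)  # 1
```

Only the first of the three identical packets takes the slow path; the remaining two are served from the cache.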

Finally, there are various command-line interfaces, the most important one being ovs-vsctl. This utility can be used to add, modify and delete the switch components managed by OVS, like bridges, ports and so forth (more on this below). In fact, this utility operates by making updates to the OVSDB, which are then detected and realized by the OVS switch daemon. So the OVSDB is the authoritative source of the target state of the system.
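The idea that the database holds the target state and the daemon realizes it can be sketched as a simple reconciliation step. The Python snippet below is only an illustration of that pattern under the assumption that state can be reduced to a set of bridge names; `reconcile` is a hypothetical helper and not part of OVS.

```python
def reconcile(desired, actual):
    """Compare target state with actual state and return the required changes."""
    to_add = sorted(desired - actual)
    to_remove = sorted(actual - desired)
    return to_add, to_remove


# Target state as recorded in the database vs. what currently exists
desired = {"br-int", "myBridge"}
actual = {"br-int", "br-old"}

to_add, to_remove = reconcile(desired, actual)
print(to_add)     # ['myBridge']
print(to_remove)  # ['br-old']
```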

The OVS data model

To understand how OVS operates, it is instructive to look at the data model that describes the virtual switches deployed by OVS. This model is verbally described in the man pages. If you have access to a server on which OVS is installed, you can also get a JSON representation of the data model by running

ovsdb-client get-schema Open_vSwitch
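Once you have the schema as JSON, a few lines of Python are enough to extract which table refers to which other table. The sketch below assumes the schema layout described in RFC 7047 (a `tables` object whose columns may carry a `refTable` in their type) and runs on a small hand-written excerpt, not on real command output; the function name `references` is made up.

```python
def references(schema):
    """Return sorted (table, column, referenced_table) triples from a schema."""
    refs = []
    for table, table_def in schema["tables"].items():
        for column, column_def in table_def["columns"].items():
            column_type = column_def["type"]
            if not isinstance(column_type, dict):
                continue  # atomic type given as a plain string
            for part in ("key", "value"):
                base = column_type.get(part)
                if isinstance(base, dict) and "refTable" in base:
                    refs.append((table, column, base["refTable"]))
    return sorted(refs)


# Hand-written, heavily trimmed excerpt for illustration only
sample = {
    "name": "Open_vSwitch",
    "tables": {
        "Bridge": {"columns": {
            "name": {"type": "string"},
            "ports": {"type": {"key": {"type": "uuid", "refTable": "Port"},
                               "min": 0, "max": "unlimited"}},
        }},
        "Port": {"columns": {
            "name": {"type": "string"},
            "interfaces": {"type": {"key": {"type": "uuid", "refTable": "Interface"},
                                    "min": 1, "max": "unlimited"}},
        }},
        "Interface": {"columns": {
            "name": {"type": "string"},
            "type": {"type": "string"},
        }},
    },
}

for table, column, target in references(sample):
    print(f"{table}.{column} -> {target}")
```

To run this against a real installation, you could load the output of the command above with `json.loads` and pass the result to `references`.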

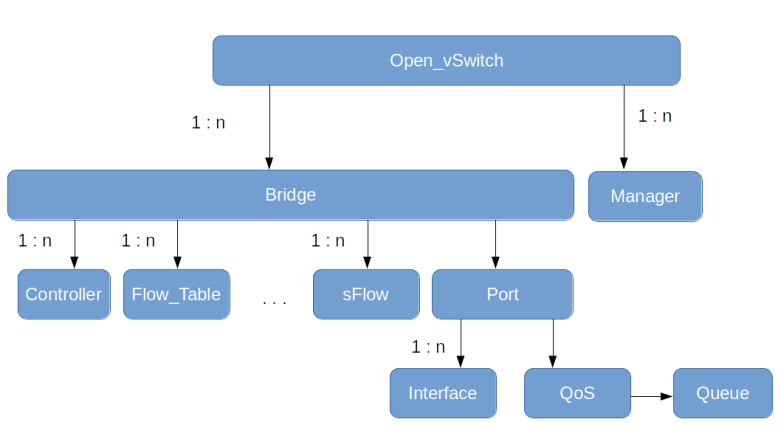

At the top level of the hierarchy, there is a table called Open_vSwitch. This table contains a set of configuration items, like the supported interface types or the version of the database being used.

Next, there are bridges. A bridge has one or more ports and is associated with a set of tables, each table representing a protocol that OVS supports to obtain flow information (for instance NetFlow or OpenFlow). Note that the Flow_Table does not contain the actual OpenFlow flow table entries, but just additional configuration items for a flow table. In addition, there are mirror ports which are used to trace and monitor the network traffic (which we ignore in the diagram).

Each port refers to one or more interfaces. In most situations, each port has one interface, but in the case of bonding, for instance, one port is backed by two interfaces. In addition, a port can be associated with QoS settings and queues for traffic control.
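The bridge, port and interface hierarchy can be mirrored in a few Python dataclasses. This is a simplified sketch that only keeps names and the interface type; the real tables have many more columns, and the device names `bond0`, `eth1` and `eth2` are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Interface:
    name: str
    type: str = "system"   # e.g. "system" for an ordinary device, or "internal"


@dataclass
class Port:
    name: str
    interfaces: List[Interface] = field(default_factory=list)


@dataclass
class Bridge:
    name: str
    ports: List[Port] = field(default_factory=list)


bridge = Bridge("myBridge", ports=[
    # The usual case: one port backed by exactly one interface
    Port("myBridge", [Interface("myBridge", type="internal")]),
    # A bond: one port backed by two interfaces
    Port("bond0", [Interface("eth1"), Interface("eth2")]),
])

for port in bridge.ports:
    print(port.name, [iface.name for iface in port.interfaces])
```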

Finally, there are controllers and managers. A controller, in OVS terminology, is some external system which talks to OVS via OpenFlow to control the flow of packets through a bridge (and thus is associated with a bridge). A manager, on the other hand, is an external system that uses the OVSDB protocol to read and update the OVSDB. As the OVS switch daemon continuously monitors this database for changes, a manager can change the setup, i.e. add or remove bridges, add or remove ports and so on, like a remote version of the ovs-vsctl utility. Therefore, managers are associated with the overall OVS instance.

Installation and first steps with OVS

Before we get into the actual labs in the next post, let us see how OVS can be installed, and let us use OVS to create a simple bridge in order to get used to the command line utilities.

On an Ubuntu distribution, OVS is available as a collection of APT packages. Usually, it should be sufficient to install openvswitch-switch, which will pull in a few additional dependencies. There are similar packages for other Linux distributions.

Once the installation is complete, you should see that two new server processes are running, called (as you might expect from the previous sections) ovsdb-server and ovs-vswitchd. To try out that everything worked, you can now run the ovs-vsctl utility to display the current configuration.

    $ ovs-vsctl show
    518a2ed6-56d0-433f-98f2-a575daf20f72
        ovs_version: "2.9.2"

The output is still very short, as we have not yet defined any objects. What it shows you is, in fact, an abbreviated version of the one and only entry in the Open_vSwitch table, which shows the unique row identifier (UUID) and the OVS version installed.

Now let us populate the database by creating a bridge, currently without any ports attached to it. Run

sudo ovs-vsctl add-br myBridge

When we now inspect the current state again using ovs-vsctl show, the output should look like this.

    518a2ed6-56d0-433f-98f2-a575daf20f72
        Bridge myBridge
            Port myBridge
                Interface myBridge
                    type: internal
        ovs_version: "2.9.2"

Note how the output reflects the hierarchical structure of the database. There is one bridge, and attached to this bridge one port (this is the default port which is added to every bridge, similarly to a Linux bridge where creating a bridge also creates a device that has the same name as the bridge). This port has only one interface of type “internal”. If you run ifconfig -a, you will see that OVS has in fact created a Linux networking device myBridge as well. If, however, you run ethtool -i myBridge, you will find that this is not an ordinary bridge, but simply a virtual device managed by the openvswitch driver.
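If you want to process this hierarchy in a script, the indentation of the output is enough to rebuild the tree. The following Python sketch assumes that each nesting level is indented by four spaces, as in the output of ovs-vsctl show on my system; `parse_show` is a made-up helper and the sample text is pasted in by hand rather than read from the tool.

```python
SAMPLE = """\
518a2ed6-56d0-433f-98f2-a575daf20f72
    Bridge myBridge
        Port myBridge
            Interface myBridge
                type: internal
    ovs_version: "2.9.2"
"""


def parse_show(text, indent=4):
    """Turn indented output into a list of nested {'label', 'children'} nodes."""
    root = {"label": None, "children": []}
    stack = [(-1, root)]  # (depth, node) pairs along the current branch
    for line in text.splitlines():
        if not line.strip():
            continue
        depth = (len(line) - len(line.lstrip(" "))) // indent
        node = {"label": line.strip(), "children": []}
        # Climb back up until the top of the stack is the parent of this line
        while stack[-1][0] >= depth:
            stack.pop()
        stack[-1][1]["children"].append(node)
        stack.append((depth, node))
    return root["children"]


tree = parse_show(SAMPLE)
top = tree[0]
print(top["label"])
print([child["label"] for child in top["children"]])
```

The top-level node is the row in the Open_vSwitch table, and its children are the bridge and the version entry.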

It is interesting to directly inspect the content of the OVSDB. You could either do this by browsing the file /etc/openvswitch/conf.db, or, a bit more conveniently, using the ovsdb-client tool.

sudo ovsdb-client dump Open_vSwitch

This will provide a nicely formatted dump of the current database content. You will see one entry in the Bridge table, representing the new bridge, and corresponding entries in the Port and Interface tables.

This closes our post for today. In the next post, we will set up an example (again using Vagrant and Ansible to do all the heavy lifting) in which we connect containers on different virtual machines using OVS bridges and a VXLAN tunnel. In the meantime, you might want to take a look at the following references which I found helpful.

OpenVSwitch.pdf

https://github.com/openvswitch/ovs

https://tools.ietf.org/html/rfc7047

OVS is a great system to use. We are currently using it at work in a multi-tiered nested LXD container system, where it is proving highly effective at allowing complete network separation of customer server environments. This setup is much more effective than anything VMware has to offer. Getting away from VMware is going to save my company a small fortune each year, and OVS is making it possible for us to use LXD as an effective alternative.
