In the last few posts, we have already touched on the OpenFlow protocol that plays a central role in most SDNs. Today, we will learn more about OpenFlow and again use Open vSwitch to see the protocol in action.

OpenFlow – the basics

Recall from our previous post that OpenFlow is a protocol that the control plane and the data plane of an SDN use to exchange rules that determine the flow of packets through the (virtual or physical) infrastructure. The standard is maintained by the Open Networking Foundation and is available here.

Let us first try to understand some basic terms. First, the specification describes the behavior of a switch. Logically, the switch is decomposed into two components. There is the control channel which is the part of the switch that communicates with the OpenFlow controller, and there is the datapath which consists of tables defining the flow of packets through the switch and the ports.

The switch maintains two sets of tables that are specified by OpenFlow. First, there are flow tables that contain – surprise – flows, and then, there are group tables. Let us discuss flow tables first.

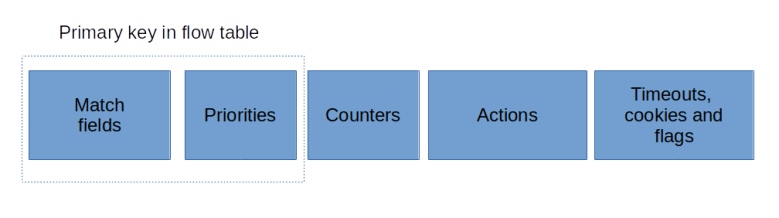

An entry in a flow table (a flow entry or flow for short) is very similar to a firewall rule in e.g. iptables. It consists of the following parts.

- A set of match fields that are used to see which flow entry applies to which Ethernet packet

- An action that is executed on a match

- A set of counters

- Priorities that apply if a packet matches more than one flow entry

- Additional control fields like timeouts, flags or cookies that are passed through

Flow tables can be chained in a pipeline. When a packet comes in, the flow tables in the pipeline are processed in sequence. Depending on the action of a matching flow table entry, a packet can then be sent directly to an outgoing port, or be forwarded to the next table in the pipeline for further processing (using the goto-table action). Optionally, a pipeline can be divided into three parts – ingress tables, group tables (more on this later) and egress tables.

A table typically contains one entry with priority zero (i.e. the lowest priority) and no match fields. As non-existing match fields are treated as wildcards, this flow matches all packets that are not consumed by other, more specific flows. Therefore, this entry is called the table-miss entry and determines how packets with no other matching rule are handled. Often, the action associated with this entry is to forward the packet to a controller to handle it. If not even a table-miss entry exists in the first table, the packet is dropped.
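To make the matching rules a bit more tangible, here is a minimal Python sketch of a flow table lookup. This is a toy model and not how OVS implements it: the class `FlowEntry`, the function `lookup` and the dictionary representation of a packet are made up for illustration. It only shows the three ideas from above, namely that missing match fields act as wildcards, that the highest priority wins, and that the table-miss entry catches everything else.

```python
from dataclasses import dataclass


@dataclass
class FlowEntry:
    priority: int
    match: dict          # field name -> required value; absent fields are wildcards
    actions: list        # hypothetical action names; an empty list means "drop"
    n_packets: int = 0   # counter, incremented on every match

    def matches(self, packet: dict) -> bool:
        # An empty match dict matches every packet (all() of nothing is True)
        return all(packet.get(k) == v for k, v in self.match.items())


def lookup(table, packet):
    """Return the matching entry with the highest priority, or None."""
    candidates = [e for e in table if e.matches(packet)]
    if not candidates:
        return None      # not even a table-miss entry: the packet is dropped
    best = max(candidates, key=lambda e: e.priority)
    best.n_packets += 1
    return best


table = [
    FlowEntry(priority=100, match={"eth_type": 0x0800, "ip_proto": 6},
              actions=["output:2"]),
    FlowEntry(priority=0, match={}, actions=["controller"]),  # table-miss entry
]

tcp_packet = {"eth_type": 0x0800, "ip_proto": 6, "tcp_dst": 80}
arp_packet = {"eth_type": 0x0806}

hit = lookup(table, tcp_packet)    # matched by the specific flow
miss = lookup(table, arp_packet)   # falls through to the table-miss entry
```

Note that the TCP packet matches both entries, and only the priority decides which one is used.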

While the packet traverses the pipeline, an action set is maintained. Each flow entry can add an action to the set or remove an action or run a specific action immediately. If a flow entry does not forward the packet to the next table, all actions which are present in the action set will be executed.
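The interplay between the pipeline and the action set can be sketched in a few lines of Python. Again, this is a simplified model under assumptions of our own: the instruction names `apply_actions`, `clear_actions`, `write_actions` and `goto_table` are borrowed from the terminology of the specification, but the data layout and the function `run_pipeline` are hypothetical. The sketch keeps at most one action per type in the action set and only hands the set over for execution once no further table is visited.

```python
def matches(match, packet):
    # Missing match fields are wildcards
    return all(packet.get(k) == v for k, v in match.items())


def run_pipeline(tables, packet):
    """Walk the tables starting at table 0.

    Returns (actions run immediately, action set executed at the end).
    """
    action_set = {}   # action type -> value, at most one action per type
    applied = []      # actions that a flow entry asked to run right away
    table_id = 0
    while table_id is not None:
        hits = [e for e in tables[table_id] if matches(e["match"], packet)]
        if not hits:
            return applied, {}          # no match at all: packet is dropped
        entry = max(hits, key=lambda e: e["priority"])
        instr = entry["instructions"]
        applied.extend(instr.get("apply_actions", []))
        if instr.get("clear_actions"):
            action_set.clear()
        action_set.update(instr.get("write_actions", {}))
        table_id = instr.get("goto_table")   # None ends the pipeline
    return applied, dict(action_set)


tables = {
    0: [{"priority": 10, "match": {"in_port": 1},
         "instructions": {"write_actions": {"push_vlan": 100},
                          "goto_table": 1}}],
    1: [{"priority": 0, "match": {},
         "instructions": {"write_actions": {"output": 2}}}],
}

applied, executed = run_pipeline(tables, {"in_port": 1})
dropped = run_pipeline(tables, {"in_port": 7})
```

A packet arriving on port 1 collects a VLAN action in table 0 and an output action in table 1, and both are executed together when the pipeline ends. The sketch does not check that a goto-table instruction only points to a later table, which a real switch would enforce.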

The exact set of actions depends on the implementation as there are many optional actions in the specification. Typical actions are forwarding a packet to another table, sending a packet to an output port, adding or removing VLAN tags, or setting specific fields in the packet headers.

In addition to flow tables, an OpenFlow compliant switch also maintains a group table. An entry in the group table is a bit like a subroutine: it does not contain any matching criteria, but packets can be forwarded to a group by a flow entry. A group contains one or more buckets, each of which in turn contains a set of actions. In the simplest case (a group of type all), when a packet is processed by a group table entry, a copy will be created for each bucket, and to each copy the actions in the respective bucket will be applied. Group tables have been added with version 1.1 of the specification.
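The subroutine analogy can be illustrated with a small Python sketch. It models only the behavior described above, where every bucket receives its own copy of the packet, which corresponds to what the specification calls a group of type all (other group types select a single bucket instead). The function names and the tuple encoding of actions are invented for this example.

```python
import copy


def apply_action(packet, action):
    # Two hypothetical action kinds are enough for this illustration
    kind, value = action
    if kind == "set_field":
        name, new_value = value
        packet[name] = new_value
    elif kind == "output":
        packet["out_port"] = value
    return packet


def process_group(group, packet):
    """Create one copy of the packet per bucket and apply the bucket's actions."""
    results = []
    for bucket in group["buckets"]:
        clone = copy.deepcopy(packet)   # the original packet stays untouched
        for action in bucket:
            apply_action(clone, action)
        results.append(clone)
    return results


group = {
    "group_id": 1,
    "buckets": [
        [("output", 1)],
        [("set_field", ("vlan_vid", 100)), ("output", 2)],
    ],
}

packet = {"eth_dst": "aa:bb:cc:dd:ee:ff"}
copies = process_group(group, packet)
```

With two buckets, we end up with two copies, one sent unmodified to port 1 and one tagged with a VLAN ID and sent to port 2.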

Lab13: seeing OpenFlow in action

After all this theory, it is time to see OpenFlow in action. For that purpose, we will use the setup in lab11, as shown below.

Let us bring up this scenario again.

git clone https://github.com/christianb93/networking-samples
cd networking-samples/lab11
vagrant up

OVS comes with an OpenFlow client ovs-ofctl that we can use to inspect and change the flows. Let us use this to display the initial content of the flow tables. On boxA, run

sudo ovs-vsctl set bridge myBridge protocols=OpenFlow14
sudo ovs-ofctl -O OpenFlow14 show myBridge

The first command instructs the bridge to use version 1.4 of the OpenFlow protocol (by default, an OVS bridge still uses the rather outdated version 1.0). The second command asks the CLI to provide some information on the bridge itself and the ports. Note that the bridge has a datapath ID (dpid) which is identical to the datapath ID stored in the Bridge OVSDB table (use ovs-vsctl list Bridge to verify this). For this and all following commands, we use the option -O to make sure that the client uses version 1.4 of the protocol as well. As an example, here is the command to display the flow table.

sudo ovs-ofctl -O OpenFlow14 dump-flows myBridge

This should create only one line of output, describing a flow in table 0 (the first table in the pipeline) with priority 0 and no match fields. This is the table-miss rule mentioned above. The associated action NORMAL specifies that the normal switch processing should take place, i.e. the device should operate like an ordinary switch without involving OpenFlow.

Now let us generate some traffic. Open an SSH connection to boxB and execute

sudo docker exec -it web3 /bin/bash
ping -i 1 -c 10 172.16.0.1

to create 10 ICMP packets. If we now take another look at the OpenFlow table, we should see that the counter n_packets has changed and should now be 24 (10 ICMP requests, 10 ICMP replies, 2 ARP requests and 2 ARP replies).

Next, we will add a flow which will drop all traffic with TCP destination port 80, including the HTTP requests coming in from the container web3. Here is the command to do this.

sudo ovs-ofctl \
  -O OpenFlow14 \
  add-flow \
  myBridge \
  'table=0,priority=1000,eth_type=0x0800,ip_proto=6,tcp_dst=80,action='

The syntax of the match fields and the actions is a bit involved and is described in more detail in the man pages for ovs-fields and ovs-actions. Note that we leave the action list empty, which implies that matching packets will be dropped. When you display the flow tables again, you will see the additional rule.
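If you want to add flows like this from a script, the flow specification is easy to assemble, as it is just a comma-separated list of key=value pairs. The following Python sketch builds the argument list for the command above. The helper `build_add_flow` is hypothetical and not part of OVS, it hard-codes the OpenFlow version used in this lab, and it keeps the spelling `action=` from our command line.

```python
import shlex


def build_add_flow(bridge, table, priority, match, actions=""):
    """Build the argument list for an 'ovs-ofctl add-flow' invocation.

    An empty actions string leaves the action list empty, i.e. drop.
    """
    fields = [f"table={table}", f"priority={priority}"]
    fields += [f"{key}={value}" for key, value in match.items()]
    fields.append(f"action={actions}")
    flow_spec = ",".join(fields)
    return ["sudo", "ovs-ofctl", "-O", "OpenFlow14", "add-flow", bridge, flow_spec]


cmd = build_add_flow(
    "myBridge",
    table=0,
    priority=1000,
    match={"eth_type": "0x0800", "ip_proto": 6, "tcp_dst": 80},
)

# Print the command in a form that could be pasted into a shell
print(shlex.join(cmd))
```

On boxA, a list like this could be passed to `subprocess.run` to actually install the flow. Here, we only print the command, so the sketch can be tried without a running bridge.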

Now head over to the terminal connected to the container web3 and try to curl web1. You should see an error message from curl telling you that the destination could not be reached. If you dump the flows once more, you will find that the statistics of our newly added rule have changed and the counter n_packets is now 2. If we delete the flow again using

sudo ovs-ofctl \
  -O OpenFlow14 \
  del-flows \
  myBridge \
  'table=0,eth_type=0x0800,ip_proto=6,tcp_dst=80'

and repeat the curl, it should work again.

This was of course a simple setup, with only one table and no group tables. Much more complex processing can be realized with OpenFlow, and we refer to the OVS tutorials to see some examples in action.

One final note which might be useful when it comes to debugging. Like many switches, OVS is not a pure OpenFlow switch, but maintains, in addition to the OpenFlow tables, another set of rules called the datapath flows. Sometimes, this causes confusion when the observed results do not seem to match the existing OpenFlow table entries. These additional flows can be dumped using the ovs-appctl tool, see the Open vSwitch FAQs for details.
