A couple of years back, when I first looked into Docker in more detail, I put together a few pages on how Docker is utilizing some Linux kernel technologies to realize process isolation. Recently I have been using Docker again, so I thought it would be a good point in time to dig out some of that and create two or maybe three posts on some Docker internals. Lets get started….

Container versus virtual machines

You probably have seen the image below or a similar image before, but for the sake of completeness let us quickly recap what the main difference between a container like Docker and a virtual machine is.

On the left hand side, we see a typical stack when full virtualization is used. The exact setup will depend on the virtualization model that is being used, but in many cases (like running VirtualBox on Linux), you will have the actual hardware, a host operating system like Linux that consists of the OS kernel and on top of that a file system, libraries, configuration files etc. On these layers, the virtual machine is executing as an application. Inside the virtual machine, the guest OS is running. This could again be Linux, but could be a different distribution, a different kernel or even a completely different operating system. Inside each virtual machine, we then again have an operating kernel system kernel, all required libraries and finally the applications.

This is great for many purposes, but also introduces some overhead. If you decide to slice and dice your applications into small units like microservices, your actual applications can be rather small. However, for every application, you still need the overhead of a full operating system. In addition, a full virtualization will typically also consume a few resources on the host system. So full virtualization might not always be the perfect solution.

Enter containers. In a container solution, there is only one kernel – in fact, all containers and the applications running in them use the same kernel, namely the kernel of the host OS. At least logically, however, they all have their own root file system, libraries and so on. Thus containers still have the benefit of a certain isolation, i.e. different applications running in different container are still isolated on the file system level, can use networking resources like ports and sockets without conflicting and so forth, while reducing the overhead by sharing the kernel. This makes containers a good choice if you can live with the fact that all applications run one one OS and kernel version.

But how exactly does the isolation work? How does a container create the illusion for a process running inside it that it is the exclusive user of the host operating system? It turns out that Docker uses some technologies built into the Linux kernel to do this. Let us take a closer look at those core technologies one by one.

Core technologies

Let us start with namespaces. If you know one or more programming languages, you have probably heard that term before – variables and other objects are assigned to namespaces so that a module can use a variable x without interfering with a variable of the same name in a different module. So namespaces are about isolation, and that is also their role in the container world.

Let us look at an example to understand this. Suppose you want to run a web server inside a container. Most web servers will try to bind to port 80 on startup. Now at any point in time, only one application can listen on that port (with the same IP address). So if you start two containers that both run a web server, you need a mechanism to make sure that both web servers can – inside their respective container – bind to port 80 without a conflict.

A similar issue exists for process IDs. In the “good old days”, a Linux process was uniquely identified by its process ID, so there was exactly one process with ID 1 – usually the init process. ID 1 is a bit special, for instance when it comes to signal handling, and usually different copies of the user space OS running in different containers will all try to start their own init process and might rely on it having process ID one. So again, there is a clash of resources and we need some magic to separate them between containers.

That is exactly what Linux namespaces can do for you. Citing from the man page,

A namespace wraps a global system resource in an abstraction that makes it appear to the processes within the namespace that they have their own isolated instance of the global resource.

In fact, Linux offers namespaces for different types of resources – networking resources, but also mount points, process trees or the good old System V inter-process communication devices. Individual processes can join a namespace or leave a namespace. If you spawn a new process using the clone system call, you can either ask the kernel to assign the new process to the same namespaces as the parent process, or you can create new namespaces for the child process.

Linux exposes the existing namespaces as symbolic links in the directory /proc/XXXX/ns, where XXXX is the process id of the respective process. Let us try this out. In a terminal, enter (recall that $$ expands to the PID of the current process)

$ ls -l /proc/$$/ns total 0 lrwxrwxrwx 1 chr chr 0 Apr 9 09:36 cgroup -> cgroup:[4026531835] lrwxrwxrwx 1 chr chr 0 Apr 9 09:36 ipc -> ipc:[4026531839] lrwxrwxrwx 1 chr chr 0 Apr 9 09:36 mnt -> mnt:[4026531840] lrwxrwxrwx 1 chr chr 0 Apr 9 09:36 net -> net:[4026531957] lrwxrwxrwx 1 chr chr 0 Apr 9 09:36 pid -> pid:[4026531836] lrwxrwxrwx 1 chr chr 0 Apr 9 09:36 user -> user:[4026531837] lrwxrwxrwx 1 chr chr 0 Apr 9 09:36 uts -> uts:[4026531838]

Here you see that each namespace to which the process is assigned is represented by a symbolic link in this directory (you could use ls -Li to resolve the link and display the actual inode to which it is pointing). If you compare this with the content of /proc/1/ns (you will need to be root to do this), you will probably find that the inodes are the same, so your shell lives in the same namespace as the init process. We will later see how this changes when you run a shell inside a container.

Namespaces already provide a great deal of isolation. There is one namespace, the mount namespace, which even allows different processes to mount different volumes as root directory, and we will later see how Docker uses this to realize file system isolation. Now, if every container really had its own, fully, independent root file system, this would again introduce a high overhead. If, for instance, you run two containers that both use Ubuntu Linux 16.04, a large part of the root file system will be identical and therefore duplicated.

To be more efficient, Docker therefore uses a so called layered file system or union mount. The idea behind this is to merge different volumes into one logical view. Suppose, for instance, that you have a volume containing a file /fileA and another volume containing a file /fileB. With a traditional mount, you could mount any of these volumes and would then see either file A or file B. With a union mount, you can mount both volumes and would see both files.

That sounds easy, but is in fact quite complex. What happens, for instance, if both volumes contain a file called /fileA? To make this work, you have to add layers, where each layer will overlay files that already exist in lower layers. So your mounts will start to form a stack of layers, and it turns out that this is exactly what we need to efficiently store container images.

To understand this, let us again look at an example. Suppose you run two containers which are both based on the same image. What then essentially happens is that Docker will create two union mounts for you. The lowest layer in both mounts will be identical – it will simply be the common image. The second layer, however, is specific to the respective container. When you now add or modify a file in one container, this operation changes only the layer specific to this container. The files which are not modified in any of the containers continue to be stored in the common base layer. Thus, unless you execute heavy write operations in the container, the specific layers will be comparatively small, reducing the overhead greatly. We will see this in action soon.

Finally, the last ingredient that we need are control groups, abbreviated as cgroups. Essentially, cgroups provide a way to organize Linux processes into hierarchies in order to manage resource limits. Being hierarchies, cgrous are again exposed as part of the file system. On my machine, this looks as follows.

chr:~$ ls /sys/fs/cgroup/ blkio cpu cpuacct cpu,cpuacct cpuset devices freezer hugetlb memory net_cls net_cls,net_prio net_prio perf_event pids systemd

We can see that there are several directories, each representing a specific type of resources that we might want to manage. Each of these directories can contain an entire file system tree, where each directory represents a node in the hierarchy. Processed can be assigned to a node by adding their process ID to the file tasks that will find in each of these nodes. Again, the man page turns out to be a helpful resource and explains the meaning of the different entries in the /sys/fs/cgroup directories.

Practice

Let us now see how all this works in practice. For that purpose, let us open two terminals. In one of the terminals – let me call this the container terminal – start a container running the Alpine distribution using

docker run --rm -it alpine

In the second window which I will call the host window, we can now use ps -axf to inspect the process tree and then look at the directories in /proc to browse the namespaces.

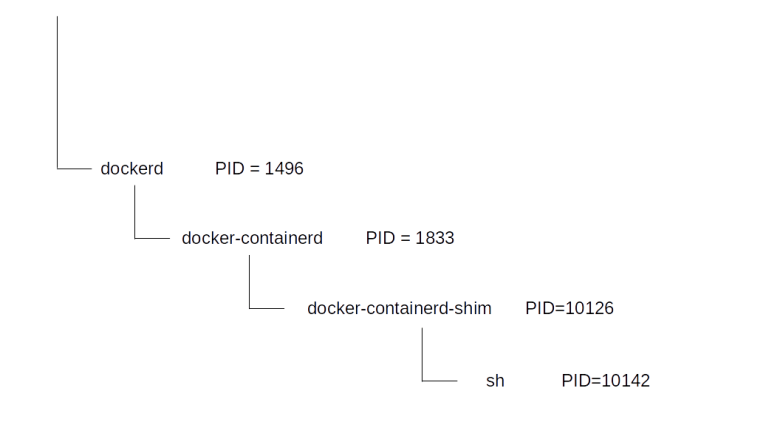

What you will find is that there are three processes involved. First, there is the docker daemon itself, called dockerd. In my case, this process has PID 1496. Then, there is a child process called docker-containerd, which is the actual container runtime within the Docker architecture stack. This process in turn calls a process called docker-containerd-shim (PID 10126) which then spawns the shell (PID 10142 in my case) inside the container.

Now let us inspect the namespaces associated with these processes first. We start with the shell itself.

$ sudo ls -Lil /proc/10142/ns total 0 4026531835 -r--r--r-- 1 root root 0 Apr 9 11:20 cgroup 4026532642 -r--r--r-- 1 root root 0 Apr 9 11:20 ipc 4026532640 -r--r--r-- 1 root root 0 Apr 9 11:20 mnt 4026532645 -r--r--r-- 1 root root 0 Apr 9 10:48 net 4026532643 -r--r--r-- 1 root root 0 Apr 9 11:20 pid 4026531837 -r--r--r-- 1 root root 0 Apr 9 11:20 user 4026532641 -r--r--r-- 1 root root 0 Apr 9 11:20 uts

Let us now compare this with the namespaces to which the containerd-shim process is assigned.

$ sudo ls -Lil /proc/10126/ns total 0 4026531835 -r--r--r-- 1 root root 0 Apr 9 11:21 cgroup 4026531839 -r--r--r-- 1 root root 0 Apr 9 11:21 ipc 4026531840 -r--r--r-- 1 root root 0 Apr 9 11:21 mnt 4026531957 -r--r--r-- 1 root root 0 Apr 9 08:49 net 4026531836 -r--r--r-- 1 root root 0 Apr 9 11:21 pid 4026531837 -r--r--r-- 1 root root 0 Apr 9 11:21 user 4026531838 -r--r--r-- 1 root root 0 Apr 9 11:21 uts

We see that Docker did in fact create new namespaces for almost all possible namespaces (ipc, mnt, net, pid, uts).

Next, let us compare mount points. Inside the cointainer, run mount to see the existing mount points. Usually at the very top of the output, you should see the mount for the root filesystem. In my case, this was

none on / type aufs (rw,relatime,si=e92adf256343919e,dio,dirperm1)

Running the same command on the host system, I got a line like

none on /var/lib/docker/aufs/mnt/a9c5d26a45307d4e168b3936bd65d301c8dd039336083a324ed1a0b7c2bd0c52 type aufs (rw,relatime,si=e92adf256343919e,dio,dirperm1)

The identical si attribute tells you that this is fact the same mount. You also verify this directly. Inside the container, create a file test using touch test. If you then use ls to display the contents of the mount point as seen on the host, you should actually see this file. So the host process and the process inside the container see different mount points – made possible by the namespace technology! You can now access the files from inside the container or from outside the container without having to use docker exec (though I am note sure I would recommend this).

If you want, you can even trace the individual layers of this file system on the host system by using ls /sys/fs/aufs/si_e92adf256343919e/ and printing out the contents of the various files that you will find there – you will find that there are in fact two layers, one of them being a read-only layer and the second on top being a read-write layer.

ls /sys/fs/aufs/si_e92adf256343919e/ br0 br1 br2 brid0 brid1 brid2 xi_path root:~# cat /sys/fs/aufs/si_e92adf256343919e/xi_path /dev/shm/aufs.xino root:~# cat /sys/fs/aufs/si_e92adf256343919e/br0 /var/lib/docker/aufs/diff/a9c5d26a45307d4e168b3936bd65d301c8dd039336083a324ed1a0b7c2bd0c52=rw root:~# cat /sys/fs/aufs/si_e92adf256343919e/br1 /var/lib/docker/aufs/diff/a9c5d26a45307d4e168b3936bd65d301c8dd039336083a324ed1a0b7c2bd0c52-init=ro+wh

You can even “enter the container” using the nsenter Linux command to manually attach to defined namespaces of a process. To see this, enter

$ sudo nsenter -t 10142 -m -p -u "/bin/sh" / # ls bin dev etc home lib media mnt proc root run sbin srv sys test tmp usr var / #

in a host terminal. This will attach to the mount, PID and user namespaces of the target process specified via the -t parameter, in our case this is the PID of the shell inside the container, and run the specified command /bin/sh. As a result, you will now see the file test created inside the container and see the same filesystem that is also visible inside the container.

Finally, let us take a look at the cgroups docker has created for this container. The easiest way to find them is to search for the first few characters of the container ID that you can figure out using docker ps (I have cut off some lines at the end of the output).

$ docker ps CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES 7c0f142bfbfd alpine "/bin/sh" About an hour ago Up About an hour quizzical_jones $ find /sys/fs/cgroup/ -name "*7c0f142bfbfd*" /sys/fs/cgroup/pids/docker/7c0f142bfbfdb9320166f92d17ecbf9d462e9c234916632f23ec1b454fb6eb52 /sys/fs/cgroup/pids/system.slice/var-lib-docker-containers-7c0f142bfbfdb9320166f92d17ecbf9d462e9c234916632f23ec1b454fb6eb52-shm.mount /sys/fs/cgroup/freezer/docker/7c0f142bfbfdb9320166f92d17ecbf9d462e9c234916632f23ec1b454fb6eb52 /sys/fs/cgroup/net_cls,net_prio/docker/7c0f142bfbfdb9320166f92d17ecbf9d462e9c234916632f23ec1b454fb6eb52 /sys/fs/cgroup/cpuset/docker/7c0f142bfbfdb9320166f92d17ecbf9d462e9c234916632f23ec1b454fb6eb52

If you now inspect the task files in each of the newly created directories, you will find the PID of the container shell as seen from the root namespace, i.e. 10142 in this case.

This closes this post which is already a bit lengthy. We have seen how Docker uses union file systems, namespaces and cgroups to manage and isolate container and how we can link the resources as seen from within the container to resources on the host system. In the next posts, we will look in more detail at networking in Docker. Until then, you might want to consult the followings links that contain additional material.

- J. Pettazone has put slides from a talk online

- The overview from the official Docker documentation briefly explains the core technologies

- There are of course already many excellent blog posts on Docker internals, like the one by Julia Evans

- The post from the Docker Saigon community

In case you are interested in container platforms in general, you also might want to take a look at my series on Kubernetes that I have just started.

3 Comments